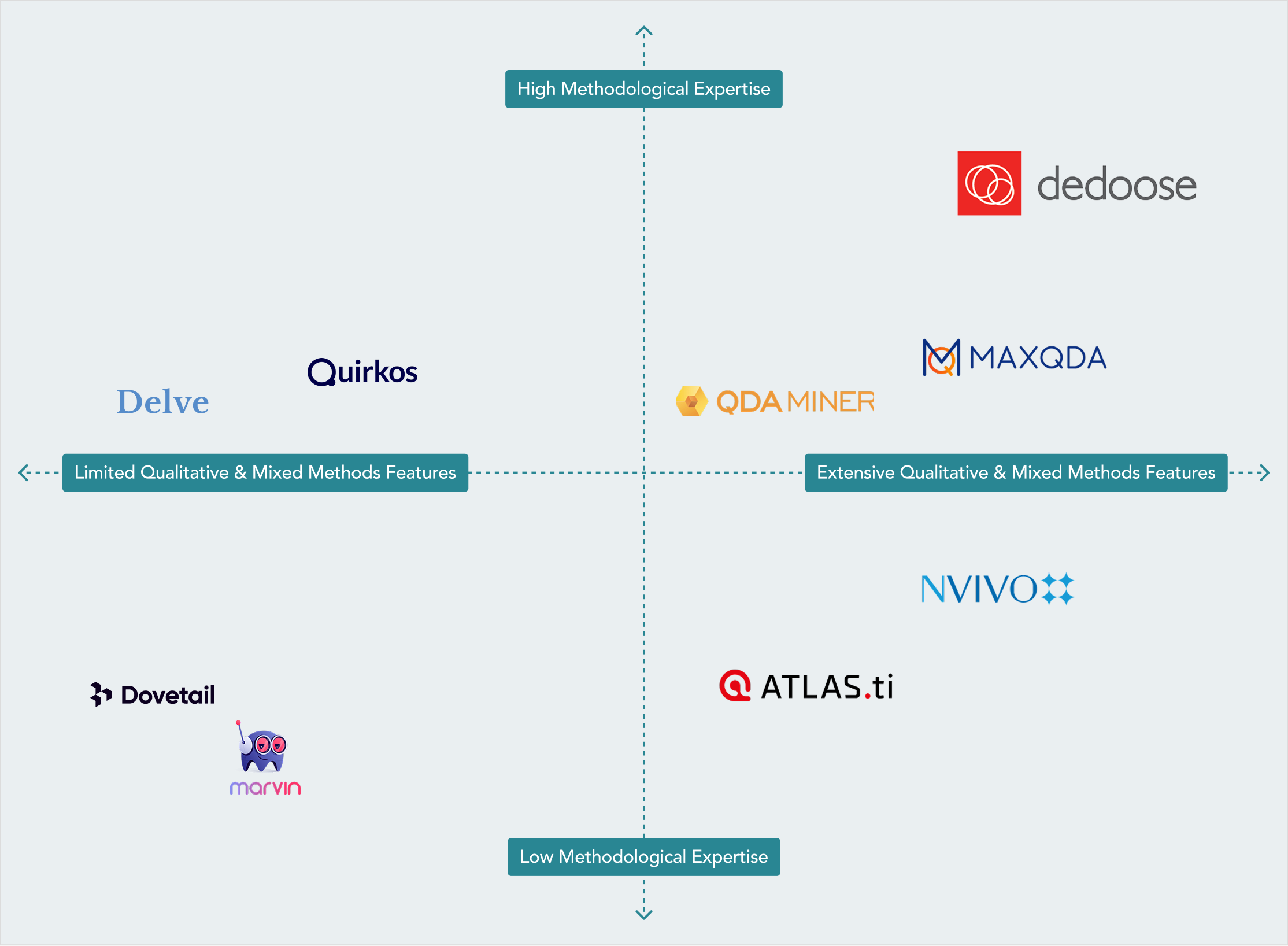

With that baseline established, here is how the other tools in this evaluation measured up, and where the gaps are most consequential for researchers choosing among them.

NVivo and ATLAS.ti: Established Tools with Real Gaps

NVivo and ATLAS.ti tend to be the first tools graduate programs introduce to students, largely because of their institutional longevity and the volume of methodological literature that references them. That familiarity has real value — there’s a substantial body of published guidance on using both tools across different methodological traditions.

But familiarity shouldn’t be confused with effectiveness or comprehensiveness.

NVivo scores a 7 on features, which reflects a genuinely capable qualitative analysis environment. It handles complex coding structures, supports multiple data formats, and has reasonably sophisticated querying and visualization tools. Where it falls short is on quantitative integration and collaboration. Researchers doing mixed methods work often find themselves moving data in and out of NVivo to run analyses elsewhere, then manually reconciling qualitative and quantitative findings, a process that introduces both inefficiency and interpretive risk. NVivo has not focused its development on the joint display and integration functionality that serious mixed methods designs require.

The support score of 3 reflects something many experienced NVivo users will recognize: when you run into trouble, you’re largely on your own. The documentation is extensive but oriented toward technical procedures rather than methodological guidance. Support response times are known to be slow, and no plan type offers the kind of substantive methodological consultation that complex research designs often require. If your question is “how do I perform this operation in the software?” NVivo’s documentation can usually get you there. If your question is “how should I structure this analytical phase given my theoretical framework?” you’ll need to look elsewhere.

ATLAS.ti

ATLAS.ti presents a different profile. Its feature score of 5 reflects solid qualitative capabilities: it handles coding, retrieval, and network visualization competently, and its interface has become more polished in recent versions. The meaningful gap is in mixed methods functionality, which ATLAS.ti has not developed in any substantive way. Researchers whose designs require integrating qualitative and quantitative data within a single analytical framework will need significant workarounds or supplemental tools. ATLAS.ti’s support score of 4 reflects decent documentation and some paid training options, but, as with NVivo, there is no mechanism for accessing genuine methodological expertise through the platform itself. Note also that both tools are now owned by the same parent company, so their support offerings may converge over time.

There is also a practical concern with both NVivo and ATLAS.ti that doesn’t get enough attention: the available feature sets are not fully consistent across operating systems. Researchers working on Mac versus Windows may find that certain capabilities behave differently or are unavailable entirely depending on their platform. For teams whose members work across different systems, which is common in academic and applied research environments, this introduces an additional and rarely discussed barrier. Moreover, real-time collaboration, where it exists at all, is treated as a premium add-on rather than a standard part of the product, so teams that need to work together must pay more for a capability that should be foundational.

MAXQDA: The Closest Competitor

MAXQDA is the strongest competitor in this evaluation and deserves honest recognition. Its feature score of 8 reflects a platform that has invested seriously in mixed methods capability. The ‘Analytics Pro’ version of the tool includes statistical functions (ANOVA, t-tests, correlations) alongside its qualitative tools, offers strong visualization options including joint displays, and supports the data linkages required for mixed methods integration. Researchers who have used both MAXQDA and NVivo for mixed methods work often note that MAXQDA’s integration of qualitative and quantitative analysis feels more structurally coherent and less like an afterthought bolted on.

The gap opens on the expertise and support dimension, where MAXQDA scores a 5. The training resources are solid (regular webinars, methodologically aware documentation, an active user community), but they are oriented primarily toward learning the software rather than toward supporting the methodological decisions that shape a study’s rigor in real-world settings. The support team is technically competent, but the infrastructure isn’t structured around project-based consultation or the kind of ongoing methodological engagement that researchers navigating complex designs often need. This is less of a concern for researchers who are already confident in their methodological skills and simply want software to execute their work. For researchers who are still working through design questions or who anticipate methodological challenges along the way, however, these limitations are more consequential.

On pricing, MAXQDA is a perpetual license product at a price point that reflects its feature depth, which means an upfront cost that can be significant for individual researchers, graduate students, or small teams without institutional funding. As with NVivo and ATLAS.ti, collaboration is not included as standard; it carries additional cost as well as restrictions on the number of collaborators. The cross-platform experience is more consistent than NVivo’s, but researchers should still verify that the specific capabilities their design requires are available on their operating system before committing.

Quirkos: Strong Support, Limited Scope

Quirkos scores a 4 on features and a 6 on expertise and support — essentially the inverse of the pattern seen in the larger tools — and that trade-off is worth further examination.

Quirkos’s support and documentation are genuinely sound and notably better than is typical for a tool at its feature level. Its blog and resource library are methodologically thoughtful, written by researchers who clearly understand qualitative research practice, and they address real questions researchers encounter rather than serving primarily as software tutorials. For a researcher doing focused qualitative work, such as interview analysis or document review, who values having substantive guidance available, Quirkos can deliver real value.

The primary constraint with Quirkos is its analytical scope. Quirkos is designed for qualitative text coding and has not prioritized mixed methods integration. There’s no framework for linking qualitative findings to quantitative data, no statistical functionality, and limited support for the complex, multi-layered coding structures that more advanced qualitative approaches require. A researcher whose work stays comfortably within qualitative-only territory may find Quirkos serves them well. A researcher whose design evolves toward integration (as many do, particularly in applied research contexts) should expect to bear the cost of migrating to a different tool.

Delve, Dovetail, and HeyMarvin: Built for a Different Purpose

These three tools share a profile: feature scores of 2, expertise scores between 2 and 5, and design philosophies oriented toward UX research and product teams rather than academic or applied social science. They’re built for teams that need to synthesize user interviews quickly, tag feedback, and identify surface-level patterns to inform product decisions. That work is legitimate and valuable within its scope, but it operates under different standards and expectations than those of peer-reviewed research or formal program evaluation.

Delve scores highest of the three on expertise, with a 5, because its support documentation is well written and reflects some genuine familiarity with research practice. But its overall feature set is simply not built for many of the analytical demands researchers face: no mixed methods integration, no multi-coder infrastructure with reliability tools, limited code management, and no statistical capability.

For researchers whose work must meet the standards and expectations of dissertation committees, peer reviewers, IRBs, or funding agencies, none of these tools was designed to suffice. To be clear, this is a descriptive observation rather than a criticism; these tools serve the needs of a different community effectively. Researchers who find themselves drawn to these platforms because of their accessibility and clean interfaces should be aware of what they’re trading away in analytical breadth and depth, and of the consequences for the ultimate impact of their findings.

A Note on AI-Assisted Tools

A growing number of tools are positioning AI, in the form of large language models, as a primary feature: automated coding suggestions, AI-generated theme summaries, machine-assisted pattern identification. This development deserves careful thought on many levels, and for that reason it was not included in the grading criteria. AI is increasingly used to analyze text-based data, particularly by non-academic users whose goal is a general impression of their data rather than true analysis.

However, there are substantial methodological and epistemological concerns that deserve straightforward attention. The interpretive work at the heart of qualitative research, making meaning from data in a theoretically grounded, epistemologically coherent, context-sensitive way, is inherently a human-driven endeavor that cannot be automated without fundamentally changing the definition and expectations of qualitative research. The value proposition of qualitative inquiry rests on the human researcher: their defined positionality, the theoretical framework they employ, and their accountability to the people whose experiences are represented in the data. These human factors shape how comprehensive, contextually responsive interpretive decisions are made, and how evidence is drawn from the data and presented to support and defend the resulting findings. When this work is delegated to a machine, even partially, any interpretation of results must acknowledge, and ideally explain, how the process has changed and which knowledge claims have been affected as a result.

This methodological concern is significant and clear, but there is a second set of concerns about the safe use of AI that researchers need to weigh, and we’d argue these are not being raised with sufficient alarm.

Data Privacy and Research Ethics

Without exception, the protection of human-subjects data is of fundamental importance to researchers around the world. Qualitative research typically involves sensitive data and can include interviews with vulnerable populations, personally identifiable information, protected health data, confidential organizational information, or the private experiences of individuals who consented to participate in a research study. Properly sanctioned and evaluated research programs seek formal consent from participants with the promise that the data they provide will be protected and used only for approved research purposes by approved members of the research team, not processed by a third-party AI system. When research routes data through an external AI provider, those data necessarily leave the researcher-controlled environment, and their subsequent protection relies on the agreement between the tool vendor and the AI provider. Whether those data are retained, used for model training, or accessible to the provider’s systems for other purposes are questions that must be answered, yet most tools with AI integrations do not provide enough detail or clarity to withstand standard IRB-level scrutiny.

This is not a hypothetical concern. The major AI providers whose models are embedded in many of these tools, including OpenAI, Anthropic, Amazon, and Google, have each faced serious and documented scrutiny over their data practices, training data provenance, and the terms under which user data are processed and potentially retained. Many of the models underlying these tools were trained on data acquired under contested circumstances, raising unresolved questions about intellectual property and consent that the research community has not yet fully grappled with. Researchers working under IRB protocols, HIPAA requirements, or data use agreements with funding agencies should formally ask where their data travel when they use an AI-integrated analysis tool, and verify that the answer is consistent with the terms of their research agreements.

Data security has been a foundational principle for Dedoose since the platform was built. The researchers who founded Dedoose understood that qualitative data is not generic, sterile information; it is often among the most sensitive material researchers gather, because it captures the words, experiences, and lives of real people who trust the research process. That understanding shapes decisions about the Dedoose architecture, data handling, and which features to build and which not to build. It is why Dedoose will not integrate AI in ways that would route participant data through external model providers. This decision reflects more than caution for the sake of the product: it protects the integrity of rigorous, proven research methods and investigators’ obligations to research ethics.

The social science research field is still working out whether LLMs have a place in social science and, if so, what responsible LLM integration would look like, and we think this deserves an open conversation. In the meantime, we believe that tools currently leading with AI as a selling point are not giving appropriate consideration to the seriousness of its impact on the field and on the quality of research findings. Researchers deserve to know what they’re agreeing to when they use these features, and right now, in most cases, many important questions remain unanswered.

Choosing a Qualitative Analysis Tool: Key Questions to Consider

Rather than a simple recommendation, here are the questions worth considering:

- What does your research design require? Be specific about whether you need mixed methods integration, statistical analysis within your qualitative environment, multi-coder infrastructure, or support for specific data formats. Many researchers discover mid-project that their initial tool choice didn’t account for what the design actually demanded.

- What is the real cost of using a tool for your research? Base licensing prices rarely tell the full story. Factor in whether collaboration is included or costs extra, whether the features you need are available on your operating system, whether different team members on different platforms will have access to the same capabilities, and whether the tool requires institutional infrastructure to function as advertised. Lower headline pricing can quickly become higher actual costs once those variables are accounted for.

- Where do you expect your research to go? The tool that fits your current project may not serve your needs in the future. If you’re early in your career, working across methodological traditions, or likely to expand into mixed methods, multimedia analysis, or larger team structures, it’s worth asking whether the tool you’re considering has room to grow with you or whether you’ll need to re-evaluate in two years.

- Who needs access to the project, and what will it cost to include them? If your research involves a multi-person team, cross-institutional collaborators, community partners, or faculty oversight, understand how each tool handles collaboration and the associated cost. Seat-based licensing and institutional access restrictions are common enough that they’re worth investigating before you commit to a platform.

- How confident are you in your methodology? If you’re working through design questions, making decisions about an analytical approach, or anticipating methodological challenges, the expertise available through your tool’s support infrastructure matters considerably. If you’re executing a well-established design with a clear roadmap, that dimension may be less pressing.

- What standards will your work be held to? Academic research, program evaluation, applied research in clinical or policy contexts, and research supported by different funding sources all operate under different forms of scrutiny. Tools designed for product research may not support the documentation, analytical depth, levels of security, or methodological infrastructure those situations require.

- What happens when you get stuck? Every research project encounters points where the path forward isn’t clear. Understanding what support is available — and what kind of support — is a practical consideration worth making explicit before you’re in the middle of a problem.

A Final Note

Dedoose was built by researchers, for researchers. Rigorous research deserves purpose-built software, and that's what Dedoose delivers. Dedoose has always been about more than software; it is about supporting the researchers who ask hard questions, work with complex data, and pursue understanding in service of something larger. This evaluation reflects that same orientation — grounded in specific reasoning, honest about the competitive landscape, and focused on what genuinely matters for high-quality research practice. Social science research is important work, and the people doing it deserve tools built to support it with integrity.

Stay curious and good luck with your research!

The Dedoose Team