Article: Best Practices in Excerpting and Coding and Capitalizing on Dedoose Features

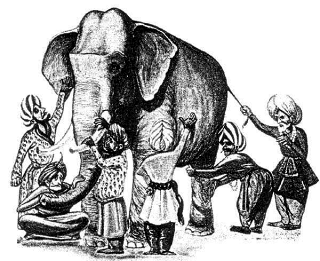

Summary—Context is King…Be a Chunker!

For Splitters, imagine running a search and retrieval for commonly coded excerpts after using a splitting style. The results will be many short excerpts, completely out of context. Remember that when you create excerpts you are viewing or listening to the entire media file, so the context is there and the broader meanings are clear while you are engaged in the process. Unfortunately for splitters, when they later review excerpts out of context, they often need to return to the source to be reminded of the broader meanings. Bummer: this can be a real time-sink, and it is the primary reason we recommend a chunking style.

For Chunkers, two big benefits:

- 1. Excerpts contain sufficient context to understand why you applied particular codes.

- 2. Many Dedoose analytic features, like the code co-occurrence matrix, are far more valuable with chunked excerpts when you are carrying out your analysis.
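To make the second benefit concrete, here is a minimal sketch of why chunking feeds a co-occurrence analysis. It is not Dedoose's implementation: the `co_occurrence` function and the example codes are hypothetical, and the sketch assumes that two codes co-occur only when they sit on the same excerpt.

```python
from collections import Counter
from itertools import combinations


def co_occurrence(excerpts):
    """Count how often each pair of codes is applied to the same excerpt."""
    counts = Counter()
    for codes in excerpts:
        # Sorting gives each pair one canonical order, so (a, b) and (b, a)
        # are counted together.
        for pair in combinations(sorted(set(codes)), 2):
            counts[pair] += 1
    return counts


# Hypothetical data: the same passage excerpted in two styles.
chunked = [{"stress", "family", "coping"}]    # one larger excerpt, three codes
split = [{"stress"}, {"family"}, {"coping"}]  # three short excerpts, one code each

chunk_counts = co_occurrence(chunked)
split_counts = co_occurrence(split)

print(dict(chunk_counts))
print(dict(split_counts))
```

In this toy case the chunked excerpt yields three code pairs, while the split excerpts yield none, because no single short excerpt carries more than one code.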

If you keep in mind that we collect and analyze qualitative data because of their richness, and that smaller numbers of words carry far less meaning (particularly out of context), there is every reason in the world to join the Chunker community and embrace the rich, deep context in your data. For more, read on!

Excerpting, Coding, and Teamwork (and Inter-Rater Reliability)

Coding Blind to the Work of Collaborators

- Each user logs into Dedoose and, before accessing a media file, they filter out the work of others via the Data Set Workspace functions

- After filtering, when viewing a media file they only see the work they contributed and can carry on with their own excerpting and tagging activity without any distraction/contamination from the work of others

- Each user does their work in this manner on the same media files

- The full team can later view the media files with all work showing and can clearly identify any variation in excerpting and coding decisions.
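For teams that want to look at the variation outside of Dedoose as well, the comparison in the last step can be sketched roughly as follows. Everything here is an assumption for illustration: excerpts are represented as hypothetical `(start, end)` character offsets with a set of codes, and `overlap_ratio` and `compare_excerpts` are made-up helper names, not Dedoose features.

```python
def overlap_ratio(a, b):
    """Overlap length divided by the span from earliest start to latest end."""
    start_a, end_a = a
    start_b, end_b = b
    shared = max(0, min(end_a, end_b) - max(start_a, start_b))
    combined = max(end_a, end_b) - min(start_a, start_b)
    return shared / combined if combined else 0.0


def compare_excerpts(coder_a, coder_b):
    """Pair each of coder A's excerpts with coder B's best-overlapping excerpt.

    Returns tuples of (span_a, span_b or None, overlap ratio, differing codes).
    """
    report = []
    for span_a, codes_a in coder_a:
        best = max(coder_b, key=lambda item: overlap_ratio(span_a, item[0]), default=None)
        if best is None or overlap_ratio(span_a, best[0]) == 0.0:
            # Coder B created no excerpt touching this passage.
            report.append((span_a, None, 0.0, set(codes_a)))
            continue
        span_b, codes_b = best
        # Symmetric difference: codes applied by one coder but not the other.
        report.append((span_a, span_b, overlap_ratio(span_a, span_b), set(codes_a) ^ set(codes_b)))
    return report


# Hypothetical blind-coding results for the same media file.
coder_a = [((0, 400), {"stress", "family"}), ((900, 1200), {"coping"})]
coder_b = [((50, 380), {"stress"}), ((1500, 1600), {"coping"})]

report = compare_excerpts(coder_a, coder_b)
for row in report:
    print(row)
```

A low overlap ratio points to a difference in excerpting style, while a non-empty set of differing codes points to a difference in coding decisions. Both are useful prompts for the team discussion.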

With the information gained from the blind coding exercise, the team is then prepared to discuss excerpting and coding decision criteria and, most importantly, come to consensus on the excerpting style that all team members should adopt moving forward. It is important to keep in mind that while the ‘style’ with which excerpting is carried out is not as critical to inter-rater reliability and validity as coding decisions, a relatively consistent style across team members can help support any conclusions that may be based on quantification of any excerpting/coding activity.

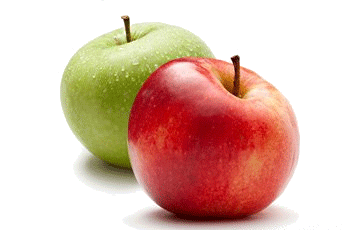

Using Document Cloning for ‘Apples to Apples’ Comparison

- One ‘trusted’ team member is assigned responsibility for creating, but not coding, excerpts in a media file

- The media file is then cloned and each cloned copy re-titled for each team member (for example doc 1_person 1, doc 1_person 2, …)

- Each team member then accesses their copy of any cloned media files and assigns codes to the existing excerpts

- From these copies there are a variety of ways to compare and contrast the coding decisions made by each team member and to continue, in more depth, the conversation about refining code application criteria toward a comfortable and shared understanding.
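One simple way to run such a comparison, sketched here under stated assumptions, is to list the codes on each shared excerpt that were not applied by every team member. The data layout (a dictionary per cloned copy, keyed by hypothetical excerpt ids such as `e1`) and the function name `coding_differences` are illustrative only and are not a Dedoose export format.

```python
def coding_differences(copies):
    """Map excerpt id -> codes that were NOT applied by every coder."""
    # Every cloned copy holds the same excerpts, so take the ids from any copy.
    excerpt_ids = next(iter(copies.values())).keys()
    differences = {}
    for excerpt_id in excerpt_ids:
        code_sets = [set(coding[excerpt_id]) for coding in copies.values()]
        applied_by_any = set.union(*code_sets)
        applied_by_all = set.intersection(*code_sets)
        contested = applied_by_any - applied_by_all
        if contested:
            differences[excerpt_id] = contested
    return differences


# Hypothetical codes applied by two team members to the same two excerpts.
copies = {
    "person 1": {"e1": {"stress"}, "e2": {"coping", "family"}},
    "person 2": {"e1": {"stress"}, "e2": {"coping"}},
}

differences = coding_differences(copies)
print(differences)
```

Because the excerpts are identical across copies, every difference in the output reflects a coding decision rather than an excerpting decision, which is the point of the ‘apples to apples’ setup.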

The Dedoose Training Center and Testing for Inter-Rater Reliability

Coding blind and independent coding using the cloning feature can continue in an iterative manner until the team feels confident in the overall structure of the coding system and in their ability to apply codes independently and consistently. At this point, the team may wish to assess inter-rater reliability more formally via the Dedoose Training Center (Dedoose 7.5.16, 2017). To make use of the training tests:

- One or more team members create and code excerpts within project media files to comprehensively represent variation in the sample data and the meanings to be identified and tagged with codes in the code system

- Training Center tests are created by selecting a set of codes on which a test should focus and selecting representative excerpts from the master project (as created in the previous step)

- Other members of the team, including those involved in the initial test excerpt creation, then take the tests in which they are presented with the set of codes assigned to the test and the excerpt content

- Test takers see the excerpt content and are responsible for selecting the appropriate codes for each excerpt in the test

- Results include Cohen’s Kappa coefficient for each code, a pooled Kappa for the full set of codes, and detailed information showing each excerpt’s content, the codes applied in the master project, and the codes selected by the test taker.
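For readers who want to see what sits behind these numbers, here is a minimal sketch of the arithmetic. Cohen's Kappa for a single code compares observed agreement with the agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e). The pooled figure below averages p_o and p_e across codes before applying the same formula, which is one common pooling approach. Whether Dedoose computes its pooled Kappa in exactly this way is an assumption, and the function names and data are hypothetical.

```python
def agreement_terms(master, taker):
    """Observed and chance-expected agreement for one code.

    Each list holds one 0/1 decision per excerpt: was the code applied?
    """
    n = len(master)
    observed = sum(m == t for m, t in zip(master, taker)) / n
    p_master = sum(master) / n
    p_taker = sum(taker) / n
    # Chance that both apply the code plus chance that both leave it off.
    expected = p_master * p_taker + (1 - p_master) * (1 - p_taker)
    return observed, expected


def kappa(observed, expected):
    """Cohen's Kappa from observed and expected agreement."""
    if expected == 1:
        return float("nan")  # undefined when chance agreement is total
    return (observed - expected) / (1 - expected)


def pooled_kappa(master_by_code, taker_by_code):
    """Average the agreement terms across codes, then apply the Kappa formula."""
    terms = [agreement_terms(master_by_code[c], taker_by_code[c]) for c in master_by_code]
    mean_observed = sum(o for o, _ in terms) / len(terms)
    mean_expected = sum(e for _, e in terms) / len(terms)
    return kappa(mean_observed, mean_expected)


# Hypothetical test with four excerpts and two codes.
master = {"stress": [1, 1, 0, 0], "coping": [0, 1, 1, 0]}
taker = {"stress": [1, 0, 0, 0], "coping": [0, 1, 1, 0]}

per_code = {c: kappa(*agreement_terms(master[c], taker[c])) for c in master}
pooled = pooled_kappa(master, taker)

print(per_code)
print(pooled)
```

In this toy data the test taker matches the master project perfectly on one code and misses one application of the other, so the per-code values differ and the pooled value falls between them.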

Where test results show acceptable levels of inter-rater reliability, the team vocalizes its delight, shares ‘high fives’ around the room, and takes the rest of the day off for cocktails and canapés at the PI’s expense. However, when test results are less than acceptable, the team reviews the available information and continues the very important discussion about how the code system and code application criteria are defined, working toward the shared understanding critical to both team confidence and the confidence they hope to instill in the consumers of their work.

References

Dedoose 7.5.16 (2017). Inter-rater reliability. SocioCultural Research Consultants, LLC (Dedoose.com). https://www.dedoose.com/userguide/interraterreliability/trainingcenterarticle#TrainingCenterArticle

Dey, I. (1993). Qualitative data analysis: A user-friendly guide for social scientists. London, UK: Routledge Kegan Paul.

Jehn, K. A. & Doucet, L. (1996). Developing categories from interview data: Text analysis and multidimensional scaling. Part 1. Cultural Anthropology Methods Journal, 8(2), 15–16.

Jehn, K. A. & Doucet, L. (1997). Developing categories for interview data: Consequences of different coding and analysis strategies in understanding text. Part 2. Cultural Anthropology Methods Journal, 9(1), 1–7.

Ryan, G. W. & Bernard, H. R. (2003). Techniques to identify themes. Field Methods, 15(1), 85–109.